I've thought about this quite a bit, and have arrived at these conclusions.

The longer the range you can use to zero the optic, the "flatter" (the smaller the angle) the line of sight will be relative to the bullet's path. The shorter the range, the steeper that angle.

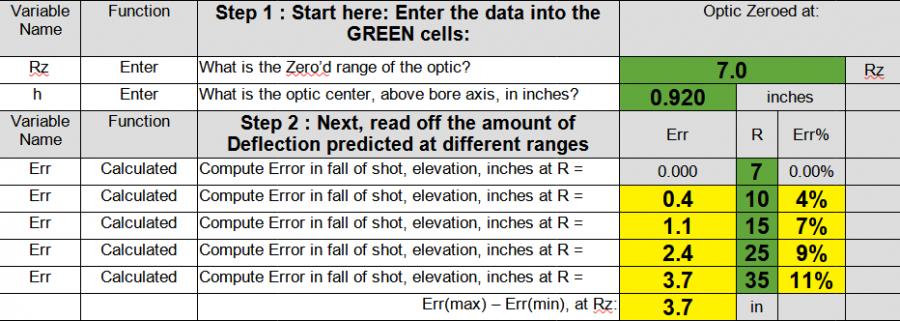
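A quick way to see this, as a sketch under simplifying assumptions: the bullet travels straight along the bore axis, the optic's line of sight starts a height $h$ above that axis, and the zero distance is $Z$ ($h$ and $Z$ in the same units). Zeroing tilts the line of sight so it descends linearly and crosses the bullet path at $Z$, at an angle $\theta$. A larger $Z$ gives a smaller $\theta$, i.e. a "flatter" sight line.

```latex
y_{\text{sight}}(d) = h\left(1 - \frac{d}{Z}\right), \qquad
\theta = \arctan\!\left(\frac{h}{Z}\right)
```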

Numerically, I computed the shot-placement error assuming perfect geometry (i.e. the bullet travels in a straight line) for a typical deck height (Holosun 507c on an FCD plate on a G34). I have a spreadsheet with all of this, but will paste in some examples.
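For anyone who wants to reproduce the idea without the spreadsheet, here is a minimal Python sketch of the same straight-bullet model. It is not the spreadsheet itself: the function names and the 1.0" sight height used in the example are hypothetical placeholders, not the measured deck height of the 507c/FCD/G34 setup. It assumes the bullet stays on the bore line and the line of sight descends linearly from the sight height to meet it at the zero distance.

```python
"""Straight-line sight-over-bore model (hypothetical sketch, not the author's spreadsheet).

Assumptions: the bullet travels straight along the bore axis (no drop), and the
optic sits `sight_height` inches above that axis. Zeroing at `zero_yd` tilts the
line of sight so it crosses the bullet path exactly at that distance.
"""


def impact_offset(sight_height: float, zero_yd: float, dist_yd: float) -> float:
    """Bullet impact relative to point of aim, in inches (negative = low).

    The line of sight starts `sight_height` above the bore and descends linearly,
    reaching the bore line at `zero_yd`. The bullet stays at bore height (0).
    """
    aim_height = sight_height * (1.0 - dist_yd / zero_yd)
    return 0.0 - aim_height


def error_spread(sight_height: float, zero_yd: float,
                 near_yd: float = 0.0, far_yd: float = 35.0, step: float = 0.5) -> float:
    """Highest minus lowest impact offset, sampled from near_yd to far_yd."""
    n = int(round((far_yd - near_yd) / step))
    offsets = [impact_offset(sight_height, zero_yd, near_yd + i * step)
               for i in range(n + 1)]
    return max(offsets) - min(offsets)


if __name__ == "__main__":
    SIGHT_HEIGHT_IN = 1.0  # hypothetical placeholder, not a measured value
    for zero in (7, 10, 25):
        print(f"zero at {zero} yd: spread out to 35 yd = "
              f"{error_spread(SIGHT_HEIGHT_IN, zero):.2f} in")
```

Because the model is linear in distance, the spread works out to sight height × (far − near) / zero distance, so only the ratio between two zero distances is independent of the sight height you plug in.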

Here is a portion with the definitions and the 7-yard zero numbers:

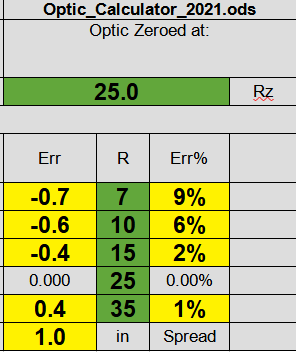

Here is the run for a 25-yard zero:

What does this mean?

Well, basically the math suggests that the "range" of error between point of aim and bullet impact is smallest with a 25-yard zero (1.0" spread), compared with a 10-yard zero (2.6" spread). Bear in mind that I compute the error out to 35 yards. So a longer zero results in less error overall.
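Why the longer zero wins can be shown with a short derivation, again assuming a straight bullet path along the bore, a sight height $h$ above the bore, and a zero distance $Z$. The impact relative to point of aim at distance $d$ is $e(d) = h\,(d/Z - 1)$, which is linear in $d$, so its spread over $[d_{\min}, d_{\max}]$ is just the difference of the endpoints. Taking $d_{\min} = 0$ and $d_{\max} = 35$ yards as an illustration:

```latex
S(Z) = e(d_{\max}) - e(d_{\min}) = \frac{h\,(d_{\max} - d_{\min})}{Z},
\qquad
\frac{S(10)}{S(25)} = \frac{25}{10} = 2.5
```

Under this simplified model the ratio does not depend on $h$. It is close to the 2.6 : 1.0 ratio above; any small difference could come from rounding or from details of the spreadsheet that aren't shown here.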

But of course your situation may vary. You may not be able to hold a group at 25. You may choose not to shoot off a rest. You may prefer a zero at "typical" CCW engagement ranges of 3-7 yards. Many things can go into your choice of zero distance.

"For me", I can just about hold a meaningful group at 10, in terms of trigger control. I would like to be better, but that's where I am. I also prefer to shoot two-handed and unsupported, since that's how I intend to use the gun in my situation (random retired civ dude trying to stay off YouTube).

So at the end of the day, I use a target I designed for my sights at 10, and then try to confirm the zero both at 25 yards on an NRA B-8 and closer in, at around 7 yards, on 1" squares.

My_MRDS_ZERO_AT_10.pdf
